Decorating Your Home with ARKit

The last several years have brought great advances in augmented reality. From Google Glass to the Microsoft HoloLens to Pokémon Go, augmented reality has become a new and exciting technology that many people are beginning to take advantage of. With the release of ARKit, Apple has made it easier than ever to incorporate augmented reality into your own app. Using the device's camera as a viewport, ARKit allows you to superimpose 2D and 3D objects onto the real world. With SpriteKit you can layer 2-dimensional objects over the real world, and with SceneKit you can place 3-dimensional objects in real space.

In this tutorial we are going to use ARKit and SceneKit to build an app that lets the user place and rearrange a piece of furniture in a room. We will use a model downloaded from TurboSquid, but feel free to use your own resources if desired.

Getting Started

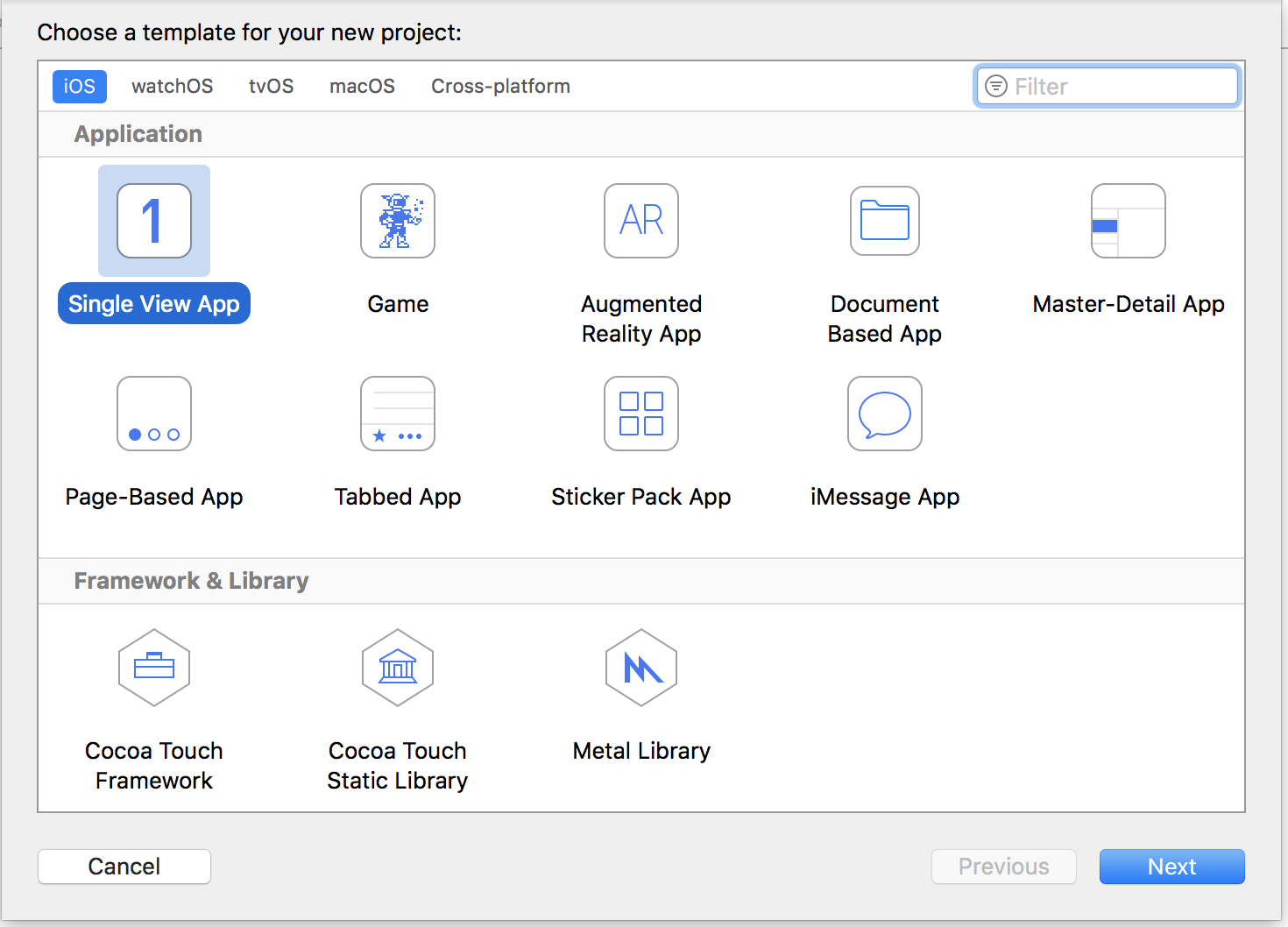

To begin, we are going to create a single-view iOS application. Although we could use Apple's ARKit template starter, building from scratch will give us a better understanding of the inner workings of ARKit. Let's call this project ARRefurnishing.

Incorporating ARKit

Now we can dig in and start configuring our app to use ARKit. Open up the ViewController.swift file. We're going to add some code to establish an ARSession, which does all of the hard work for us. First, we need to add import ARKit to the top of the file so that we have access to all of the classes that we need. Then we need to set up an IBOutlet to an ARSCNView, and create variables for our ARSession, as well as some configuration settings that go along with it.

@IBOutlet var sceneView: ARSCNView!

let session = ARSession()

let sessionConfiguration: ARWorldTrackingConfiguration = {

let config = ARWorldTrackingConfiguration()

config.planeDetection = .horizontal

return config

}()

This configuration tells the session to report all horizontal planes (floors, table tops, roads, etc.) so that we have a reference for where to place our objects. Now in viewDidLoad we want to set up the session.

override func viewDidLoad() {

super.viewDidLoad()

// Report updates to the view controller

sceneView.delegate = self

// Use the session that we created

sceneView.session = session

// Use the default lighting so that our objects are illuminated

sceneView.automaticallyUpdatesLighting = true

sceneView.autoenablesDefaultLighting = true

// Update at 60 frames per second (recommended by Apple)

sceneView.preferredFramesPerSecond = 60

}

We also need to override viewWillAppear and viewWillDisappear to make sure that our session is only running while the view is visible to the user.

override func viewWillAppear(_ animated: Bool) {

super.viewWillAppear(animated)

// Make sure that ARKit is supported

if ARWorldTrackingConfiguration.isSupported {

session.run(sessionConfiguration, options: [.removeExistingAnchors, .resetTracking])

} else {

print("Sorry, your device doesn't support ARKit")

}

}

override func viewWillDisappear(_ animated: Bool) {

// Pause ARKit while the view is gone

session.pause()

super.viewWillDisappear(animated)

}

Xcode will most likely now report an error that ViewController does not conform to ARSCNViewDelegate. Let's fix that.

extension ViewController: ARSCNViewDelegate {

func renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor) {

// Reports that a new anchor has been added to the scene

}

func renderer(_ renderer: SCNSceneRenderer, updateAtTime time: TimeInterval) {

// Reports that the scene is being updated

}

}

We will come back to these methods later; for now we will leave them blank to remove our error.

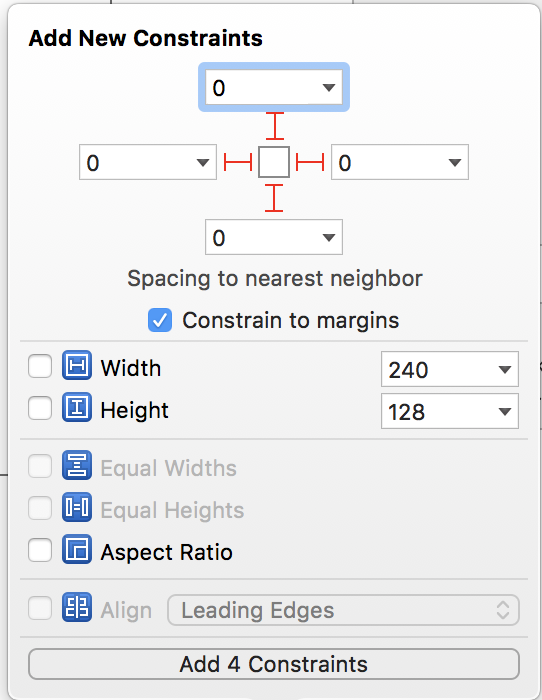

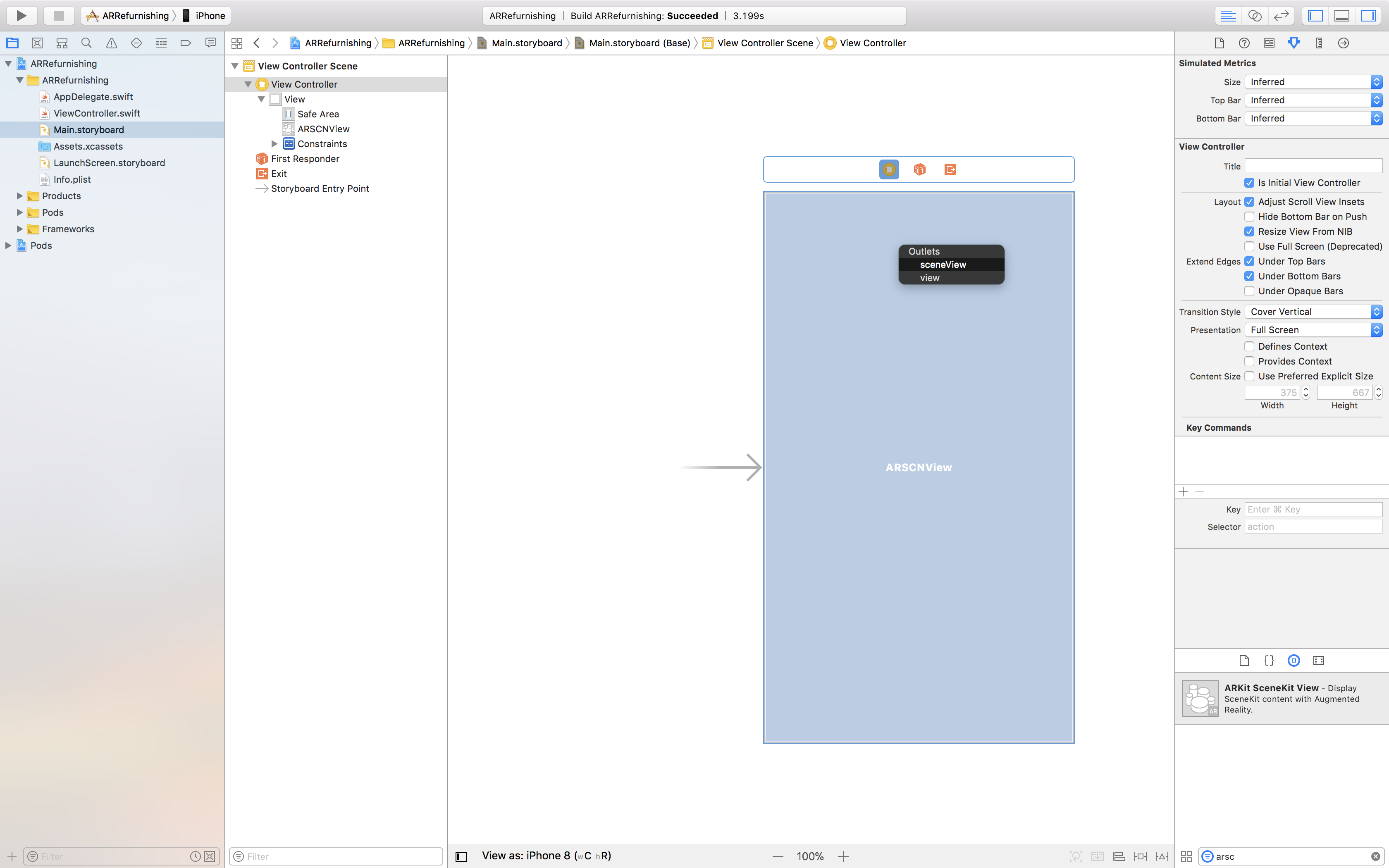

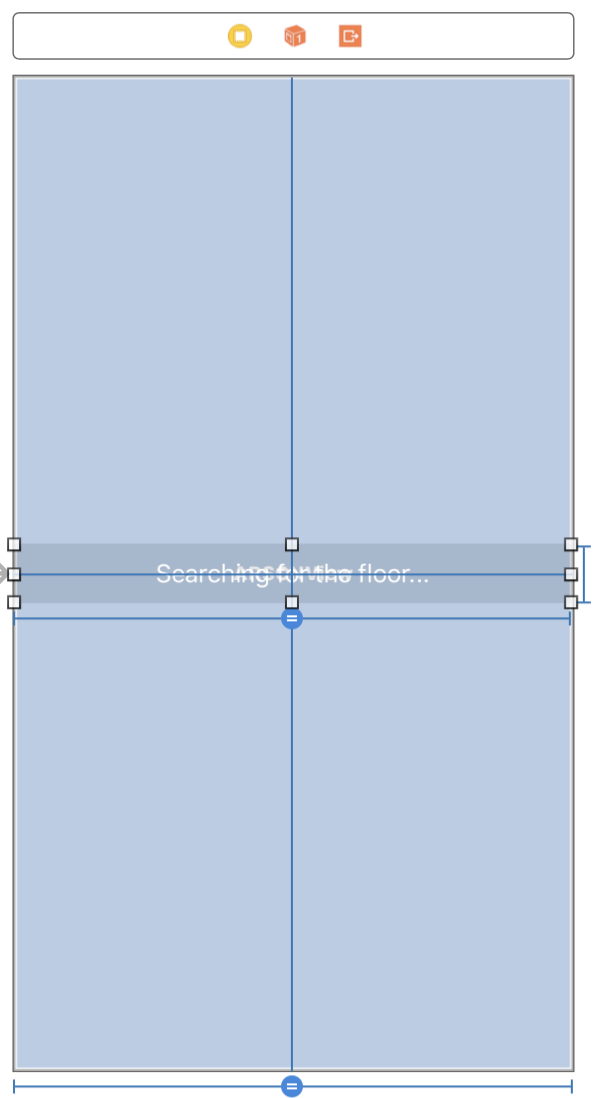

Now let's go to our storyboard and create our outlet. Add an ARSCNView as a subview of the main view controller, and add constraints so that the view fills the entire superview.

Finally connect our IBOutlet to the newly added view.

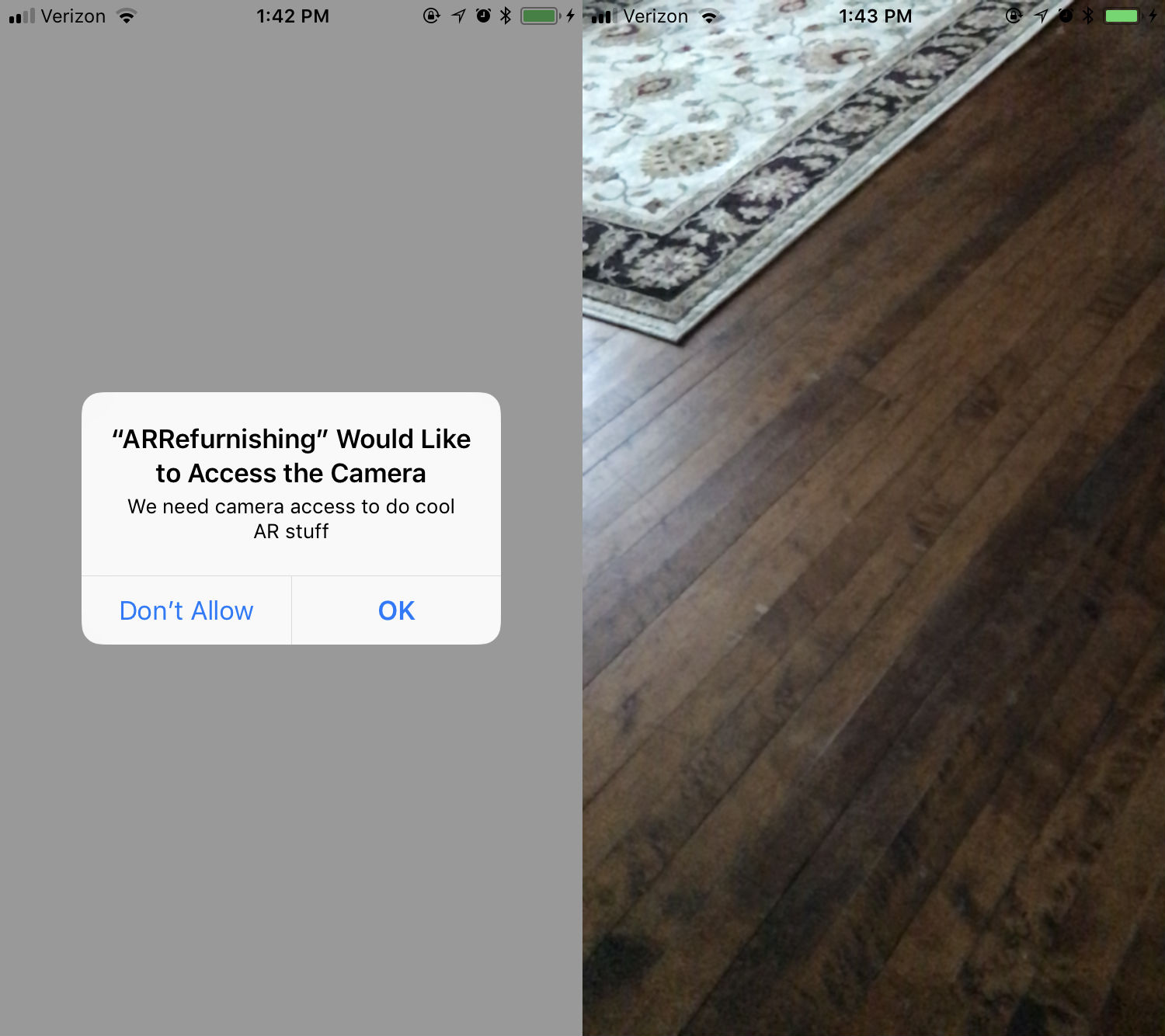

There's only one final step before we can get this application running. Go to the Info.plist file and add a new key, Privacy - Camera Usage Description, with a description of why we need camera access.

Hit run. When the app launches we will see an alert asking for camera access. After selecting OK the camera will open.

Pretty cool, right? We've successfully created our first ARKit app! Except we don't have anything to show yet... But we will in a moment!

Plane Detection

The first step to adding objects to our scene is to detect where the floor is. We are going to track the center of the screen and display a square on the floor where the user is looking. This will let them know where they are going to place the furniture. First we are going to create a custom SCNNode for the square. If you are unfamiliar with SceneKit this code may look a little strange, but all it does is subclass SCNNode and add a few yellow line segments to it.

import SceneKit

class FocalNode: SCNNode {

let size: CGFloat = 0.1

let segmentWidth: CGFloat = 0.004

private let colorMaterial: SCNMaterial = {

let material = SCNMaterial()

material.diffuse.contents = UIColor.yellow

return material

}()

override init() {

super.init()

setup()

}

required init?(coder aDecoder: NSCoder) {

super.init(coder: aDecoder)

setup()

}

private func createSegment(width: CGFloat, height: CGFloat) -> SCNNode {

let segment = SCNPlane(width: width, height: height)

segment.materials = [colorMaterial]

return SCNNode(geometry: segment)

}

private func addHorizontalSegment(dx: Float) {

let segmentNode = createSegment(width: segmentWidth, height: size)

segmentNode.position.x += dx

addChildNode(segmentNode)

}

private func addVerticalSegment(dy: Float) {

let segmentNode = createSegment(width: size, height: segmentWidth)

segmentNode.position.y += dy

addChildNode(segmentNode)

}

private func setup() {

let dist = Float(size) / 2.0

addHorizontalSegment(dx: dist)

addHorizontalSegment(dx: -dist)

addVerticalSegment(dy: dist)

addVerticalSegment(dy: -dist)

// Rotate the node so the square is flat against the floor

transform = SCNMatrix4MakeRotation(-Float.pi / 2.0, 1.0, 0.0, 0.0)

}

}
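To see how the four segments form a square outline, the layout from setup() can be reproduced in plain Swift without SceneKit. This is only a sketch: Segment and focalSquareSegments are hypothetical names used for illustration, not part of the project. Note that addHorizontalSegment shifts a tall, thin plane along the x-axis, while addVerticalSegment shifts a wide, flat plane along the y-axis.

```swift
// Hypothetical stand-in for one edge of the focal square (not a SceneKit type).
struct Segment {
    var centerX: Float
    var centerY: Float
    var width: Float
    var height: Float
}

/// Mirrors FocalNode.setup(): two thin, tall segments offset along x and
/// two wide, flat segments offset along y, each half the square's size
/// away from the center.
func focalSquareSegments(size: Float, segmentWidth: Float) -> [Segment] {
    let dist = size / 2
    return [
        Segment(centerX: dist, centerY: 0, width: segmentWidth, height: size),
        Segment(centerX: -dist, centerY: 0, width: segmentWidth, height: size),
        Segment(centerX: 0, centerY: dist, width: size, height: segmentWidth),
        Segment(centerX: 0, centerY: -dist, width: size, height: segmentWidth),
    ]
}

// Same values as FocalNode: a 0.1 square drawn with 0.004-wide lines.
let segments = focalSquareSegments(size: 0.1, segmentWidth: 0.004)
for segment in segments {
    print(segment)
}
```

Because ARKit measures world space in meters, these values describe a 10 cm square drawn with 4 mm lines.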

Now that we have something to show, add an optional variable to our ViewController class for the focal node and a variable for the center of the screen.

var focalNode: FocalNode?

private var screenCenter: CGPoint!

Also, in viewDidLoad we are going to set the screenCenter variable.

override func viewDidLoad() {

super.viewDidLoad()

// Store screen center here so it can be accessed off of the main thread

screenCenter = view.center

// Remaining code in viewDidLoad

...

}

In our implementation of ARSCNViewDelegate we are going to create a focal node when the ARSession has found a plane, and move the focal node as the user moves the camera.

extension ViewController: ARSCNViewDelegate {

func renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor) {

// If we have already created the focal node we should not do it again

guard focalNode == nil else { return }

// Create a new focal node

let node = FocalNode()

// Add it to the root of our current scene

sceneView.scene.rootNode.addChildNode(node)

// Store the focal node

self.focalNode = node

}

func renderer(_ renderer: SCNSceneRenderer, updateAtTime time: TimeInterval) {

// If we haven't established a focal node yet do not update

guard let focalNode = focalNode else { return }

// Determine if we hit a plane in the scene

let hit = sceneView.hitTest(screenCenter, types: .existingPlane)

// Find the position of the first plane we hit

guard let positionColumn = hit.first?.worldTransform.columns.3 else { return }

// Update the position of the node

focalNode.position = SCNVector3(x: positionColumn.x, y: positionColumn.y, z: positionColumn.z)

}

}

Now run the application and give the session a few moments to locate the floor; once a surface is detected a small yellow square should appear.

Notifying the User

While ARKit is searching for the floor it may be unclear to the user what is going on, so we are going to put a label on the screen that displays while the app is searching and disappears once the floor is found. First, let's add a label to the center of our view and give it white text and a transparent gray background so that it is visible over any background. We'll just have the text say "Searching for the floor..."

Add an IBOutlet to the view controller and connect it in the storyboard.

@IBOutlet var searchingLabel: UILabel!

The label will show by default, but we want to make sure that it disappears when the floor is found. At the bottom of our func renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor) implementation add an animation to make the label disappear.

// Hide the label (making sure we're on the main thread)

DispatchQueue.main.async {

UIView.animate(withDuration: 0.5, animations: {

self.searchingLabel.alpha = 0.0

}, completion: { _ in

self.searchingLabel.isHidden = true

})

}

Adding Something Real

Now that we are locating the floor and placing a marker where the user is looking, let's put a real 3D model in its place!

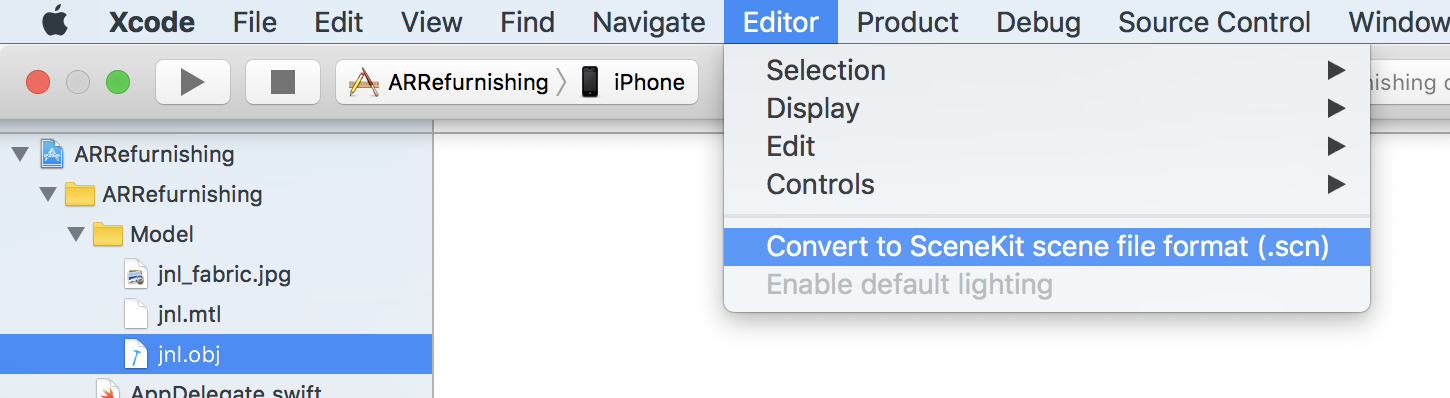

First we're going to want to download the model from TurboSquid. There are three files that we need to get this model into our app: jnl.obj, jnl.mtl, and jnl_fabric.jpg. The obj file is the model itself, the mtl file tells us where the textures will be, and the jpg is the texture for the model. Drag these into your project. Select jnl.obj and go to Editor > Convert to SceneKit scene file format (.scn). This will give us a SceneKit file that we can easily put into our app.

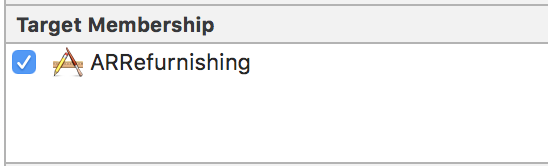

Sometimes when converting from obj to scn the file's target membership gets lost. So it's a good practice to double check the target membership and make sure that it is a part of our app.

Sometimes during conversion the textures do not copy over correctly. To remedy this we will open up the jnl.scn file and change the diffuse property on the right to be the image we imported (jnl_fabric.jpg). After that, our model should look less like a blob of color and more like a textured chair.

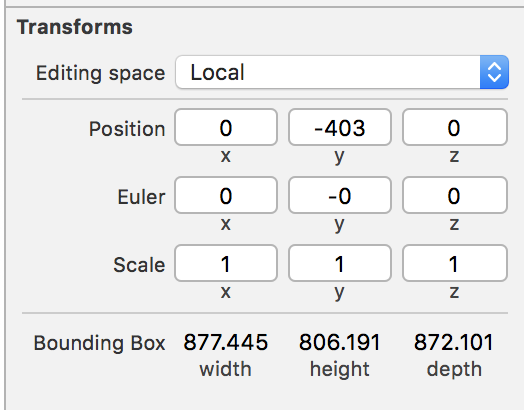

Since the model we are using is rather large, we are going to want to translate the model in the scene to be closer to the origin. We can see that the bounding box of the model has a height of around 806 pts, so to center the model correctly we want to translate the model down on the y-axis by half of the bounding box height. In the inspector for the model we can simply set the y-position to be -403.
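The -403 figure is just half the bounding box height, negated. As a minimal sketch, assuming the model's base sits at y = 0 in its own coordinates (an assumption; check the bounding box of your own model), the offset can be computed like this. The centeringOffset function is a hypothetical helper, not a SceneKit API.

```swift
/// Offset that moves the midpoint of the range [minY, maxY] onto y = 0.
func centeringOffset(minY: Float, maxY: Float) -> Float {
    return -(minY + maxY) / 2
}

// Bounding box from the tutorial: roughly 806 units tall,
// assumed here to start at y = 0.
let yOffset = centeringOffset(minY: 0, maxY: 806)
print(yOffset) // -403.0
```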

Now our model is in our app! All we need to do now is grab it from the scene and place it in our app. Let's go to the ViewController and add a new private variable for storing the model.

private var modelNode: SCNNode!

At the end of viewDidLoad we are going to load the scene file and grab the model, which will be the root node of the scene. We also need to scale down the model because its dimensions do not map 1-to-1 to real-world dimensions. How much scaling is needed varies from model to model, but here we create a vector that scales all three axes (x, y, and z) to 0.1%. Finally we are going to rotate the entire model 90 degrees about the x-axis so that the bottom of the chair is sitting on the floor.

// Get the scene the model is stored in

let modelScene = SCNScene(named: "jnl.scn")!

// Get the model from the root node of the scene

modelNode = modelScene.rootNode

// Scale down the model to fit the real world better

modelNode.scale = SCNVector3(0.001, 0.001, 0.001)

// Rotate the model 90 degrees so it sits flat on the floor

modelNode.transform = SCNMatrix4Rotate(modelNode.transform, Float.pi / 2.0, 1.0, 0.0, 0.0)
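A quick sanity check on these two numbers, sketched in plain Swift. ARKit measures world space in meters, so the scale factor is effectively "meters per model unit"; the 806-unit height comes from the bounding box mentioned earlier, and the names below are hypothetical helpers for illustration only.

```swift
/// Converts an angle in degrees to radians.
func radians(fromDegrees degrees: Float) -> Float {
    return degrees * Float.pi / 180
}

// Scale factor used in the tutorial (0.1% on every axis).
let metersPerModelUnit: Float = 0.001

// Approximate bounding box height of the chair model, in model units.
let modelHeight: Float = 806

// Height of the chair in the real world after scaling, roughly 0.8 m.
let heightInMeters = modelHeight * metersPerModelUnit
print(heightInMeters)

// The 90 degree rotation expressed in radians, matching Float.pi / 2.0.
let quarterTurn = radians(fromDegrees: 90)
print(quarterTurn)
```

If your own model comes out far too large or too small, adjusting the scale factor until the computed height looks plausible for the object is a reasonable starting point.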

Finally we want to add the model to our focal node. In renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor), after we set the focal node, we will want to add the model as a child of that focal node.

func renderer(_ renderer: SCNSceneRenderer, didAdd node: SCNNode, for anchor: ARAnchor) {

// Code above...

let node = FocalNode()

node.addChildNode(modelNode)

// Code below...

}

Now when we run the application, we will see the chair model following the camera along with our focal node!

Now we need to place the chair in the room. We need to add a gesture recognizer to our scene view. At the end of viewDidLoad we will add a few lines of code.

override func viewDidLoad() {

// Code above...

// Track taps on the screen

let tapGesture = UITapGestureRecognizer(target: self, action: #selector(viewTapped))

sceneView.addGestureRecognizer(tapGesture)

}

Then we will create a new method that responds to each tap and puts a new chair in the room if the center of the screen is over a detected plane. We start by checking that we have already found the floor and placed a focal node there. If we have, we use hitTest to project the center of the screen into the real world. This tells us whether we have hit a spot on the floor; if we have, the result's worldTransform gives us the corresponding real-world location. Column 3 of the world transform matrix holds the position, so we can ignore the rest of the matrix. We then make a clone of the model (so that it can move independently of the other nodes) and set its position. Notice that this time we are setting simdPosition, which accepts the float3 directly; setting it changes only the node's translation, so the scale and rotation we applied earlier are left intact. Then we add the new node to the scene, and we are done!

@objc private func viewTapped(_ gesture: UITapGestureRecognizer) {

// Make sure we've found the floor

guard focalNode != nil else { return }

// See if we tapped on a plane where a model can be placed

let results = sceneView.hitTest(screenCenter, types: .existingPlane)

guard let transform = results.first?.worldTransform else { return }

// Find the position to place the model

let position = float3(transform.columns.3.x, transform.columns.3.y, transform.columns.3.z)

// Create a copy of the model and set its position

let newNode = modelNode.flattenedClone()

newNode.simdPosition = position

// Add the model to the scene

sceneView.scene.rootNode.addChildNode(newNode)

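// Keep track of placed models (assumes a `private var nodes: [SCNNode] = []` property on ViewController)

nodes.append(newNode)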

}
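The reason column 3 is enough: a 4x4 transform stored by columns keeps its translation in the last column, with rotation and scale in the first three. The sketch below shows this in plain Swift. Matrix4x4 is a hypothetical stand-in for the simd matrix type that ARKit returns, used here only so the example runs without ARKit.

```swift
// Hypothetical stand-in for a column-major 4x4 transform:
// four columns, each holding four Floats.
struct Matrix4x4 {
    var columns: [[Float]]

    /// Builds a pure translation: identity in the first three columns,
    /// with the offset stored in column 3.
    static func translation(x: Float, y: Float, z: Float) -> Matrix4x4 {
        return Matrix4x4(columns: [
            [1, 0, 0, 0],
            [0, 1, 0, 0],
            [0, 0, 1, 0],
            [x, y, z, 1],
        ])
    }

    /// Reads the position back out of column 3, ignoring the rest.
    var position: (x: Float, y: Float, z: Float) {
        let column = columns[3]
        return (column[0], column[1], column[2])
    }
}

// A point half a meter right, 1.2 m down and 2 m in front of the origin.
let worldTransform = Matrix4x4.translation(x: 0.5, y: -1.2, z: -2)
let placedPosition = worldTransform.position
print(placedPosition.x, placedPosition.y, placedPosition.z)
```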

Once the app is running and has found the floor, we can tap anywhere on the screen and a new chair will be placed on the floor at the point under the center of the screen. We can then walk around and see different angles of the chair as it stays exactly where we placed it.

Rearranging

Now that we are placing objects in the room, what happens if we don't like where we placed one of them? Wouldn't it be great if we could move around objects that we've already added to the scene? Well, good news! We just need to throw a few extra gesture recognizers on there and we can do it! Let's add two new gesture recognizers right below our tap gesture in viewDidLoad.

// Tracks pans on the screen

let panGesture = UIPanGestureRecognizer(target: self, action: #selector(viewPanned))

sceneView.addGestureRecognizer(panGesture)

// Tracks rotation gestures on the screen

let rotationGesture = UIRotationGestureRecognizer(target: self, action: #selector(viewRotated))

sceneView.addGestureRecognizer(rotationGesture)

Let's start by implementing the pan gesture recognizer. This will allow us to tap and pan on an object that is already in the scene and move it around. First, we'll make a helper method that finds a node in the scene based on a given location, excluding the focal node and model node.

private func node(at position: CGPoint) -> SCNNode? {

return sceneView.hitTest(position, options: nil)

.first(where: { $0.node !== focalNode && $0.node !== modelNode })?

.node

}
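The helper relies on the identity operator (!==), which compares object references rather than contents. A minimal sketch of the same filtering idea, using a hypothetical Node class in place of SCNNode so that it runs without SceneKit:

```swift
// Hypothetical stand-in for SCNNode; only identity matters here.
final class Node {
    let name: String
    init(name: String) { self.name = name }
}

/// Returns the first hit that is not one of the excluded objects,
/// comparing by reference identity (===), not by value.
func firstSelectable(in hits: [Node], excluding excluded: [Node]) -> Node? {
    return hits.first(where: { hit in
        !excluded.contains(where: { $0 === hit })
    })
}

let focal = Node(name: "focal")
let template = Node(name: "template")
let chair = Node(name: "chair")

// Hits are ordered nearest first; the focal node is skipped.
let picked = firstSelectable(in: [focal, chair, template],
                             excluding: [focal, template])
print(picked?.name ?? "none") // chair
```

Identity comparison only excludes the exact objects listed, so a clone of the template node counts as a different object and remains selectable.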

Now we can implement the viewPanned method. During a pan gesture we need to manage three different states: 1) when the gesture begins, 2) while it is occurring, and 3) when it ends. When the gesture begins we want to find the node in the scene based on the touch location. While the gesture is changing we want to move the selected node based on the position of the finger in the view (similar to how we decided where to place the node initially). Finally, when the gesture is completed we remove the reference to the node so we do not move it accidentally.

private var selectedNode: SCNNode?

@objc private func viewPanned(_ gesture: UIPanGestureRecognizer) {

// Find the location in the view

let location = gesture.location(in: sceneView)

switch gesture.state {

case .began:

// Choose the node to move

selectedNode = node(at: location)

case .changed:

// Move the node based on the real world translation

guard let result = sceneView.hitTest(location, types: .existingPlane).first else { return }

let transform = result.worldTransform

let newPosition = float3(transform.columns.3.x, transform.columns.3.y, transform.columns.3.z)

selectedNode?.simdPosition = newPosition

default:

// Remove the reference to the node

selectedNode = nil

}

}

Implementing the rotation gesture is similar to the pan gesture, but we will also need to store the original rotation of the node. The gesture reports the total rotation since the start of the gesture, so if we added that total to the node's current rotation on every callback, the earlier rotation would be counted again each time and the node would spin far faster than the user's fingers. Adding the total to the stored original rotation instead keeps the node in step with the gesture.

private var originalRotation: SCNVector3?

@objc private func viewRotated(_ gesture: UIRotationGestureRecognizer) {

let location = gesture.location(in: sceneView)

guard let node = self.node(at: location) else { return }

switch gesture.state {

case .began:

originalRotation = node.eulerAngles

case .changed:

guard var originalRotation = originalRotation else { return }

originalRotation.y -= Float(gesture.rotation)

node.eulerAngles = originalRotation

default:

originalRotation = nil

}

}

When the gesture begins, we store the selected node's current rotation; as the gesture changes, we apply the gesture's rotation angle to the y component of that original rotation. We only change the y component because we only want the user to spin the object about the vertical axis.
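The effect of storing the original rotation can be checked with plain numbers. This sketch assumes a gesture that turns steadily and therefore reports running totals of 0.1, 0.2, and 0.3 radians; the values and helper names are made up for illustration.

```swift
/// Stored-original approach: starting angle minus the gesture's running total,
/// matching the sign used in viewRotated.
func angle(original: Float, gestureRotation: Float) -> Float {
    return original - gestureRotation
}

// The gesture reports totals since it began, not per-callback deltas.
let reportedRotations: [Float] = [0.1, 0.2, 0.3]
let startAngle: Float = 0

// Naive approach: apply every reported total to the live angle.
// Earlier rotation is counted again on each callback.
var naiveAngle = startAngle
for rotation in reportedRotations {
    naiveAngle -= rotation
}

// Stored-original approach: always rebuild from the starting angle.
var correctAngle = startAngle
for rotation in reportedRotations {
    correctAngle = angle(original: startAngle, gestureRotation: rotation)
}

// The naive angle ends near -0.6, the correct one near -0.3.
print(naiveAngle, correctAngle)
```

The naive version has turned the node twice as far as the fingers after only three callbacks, and the gap widens with every further update.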

With these two gestures implemented we can now run the application and, after an object is placed in the scene, move and rotate it. Now we can fully furnish our homes with 3D models!

Conclusion

ARKit has put the power of augmented reality into the hands of every iOS developer. Combined with other powerful libraries, such as Core ML and Vision, ARKit gives developers a strong ecosystem for creating immersive augmented reality experiences. It will be exciting to see what developers will be able to accomplish with these new technologies in hand.